Introduction

The goal of this challenge is to recognize objects from a number of visual object classes in realistic scenes (i.e. not pre-segmented objects). It is fundamentally a supervised learning learning problem in that a training set of labelled images is provided. The twenty object classes that have been selected are:

- Person: person

- Animal: bird, cat, cow, dog, horse, sheep

- Vehicle: aeroplane, bicycle, boat, bus, car, motorbike, train

- Indoor: bottle, chair, dining table, potted plant, sofa, tv/monitor

There will be two main competitions, and two smaller scale “taster” competitions.

Main Competitions

- Classification: For each of the twenty classes, predicting presence/absence of an example of that class in the test image.

- Detection: Predicting the bounding box and label of each object from the twenty target classes in the test image.

20 classes

Participants may enter either (or both) of these competitions, and can choose to tackle any (or all) of the twenty object classes. The challenge allows for two approaches to each of the competitions:

- Participants may use systems built or trained using any methods or data excluding the provided test sets.

- Systems are to be built or trained using only the provided training/validation data.

The intention in the first case is to establish just what level of success can currently be achieved on these problems and by what method; in the second case the intention is to establish which method is most successful given a specified training set.

Taster Competitions

- Segmentation: Generating pixel-wise segmentations giving the class of the object visible at each pixel, or “background” otherwise.

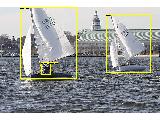

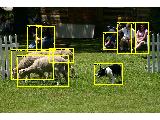

Image Objects Class

- Person Layout: Predicting the bounding box and label of each part of a person (head, hands, feet).

Image Person Layout

Participants may enter either (or both) of these competitions.

The VOC2007 challenge has been organized following the successful VOC2006 and VOC2005 challenges. Compared to VOC2006 we have increased the number of classes from 10 to 20, and added the taster challenges. These tasters have been introduced to sample the interest in segmentation and layout.

Data

The training data provided consists of a set of images; each image has an annotation file giving a bounding box and object class label for each object in one of the twenty classes present in the image. Note that multiple objects from multiple classes may be present in the same image. Some example images can be viewed online.

Annotation was performed according to a set of guidelines distributed to all annotators. These guidelines can be viewed here.

The data will be made available in two stages; in the first stage, a development kit will be released consisting of training and validation data, plus evaluation software (written in MATLAB). One purpose of the validation set is to demonstrate how the evaluation software works ahead of the competition submission.

In the second stage, the test set will be made available for the actual competition. As in the VOC2006 challenge, no ground truth for the test data will be released until after the challenge is complete.

The data has been split into 50% for training/validation and 50% for testing. The distributions of images and objects by class are approximately equal across the training/validation and test sets. In total there are 9,963 images, containing 24,640 annotated objects. Further statistics can be found here.

Example images

Example images and the corresponding annotation for the main classification/detection tasks, segmentation and layout tasters can be viewed online:

- Classification/detection example images

- Segmentation taster example images

- Person Layout taster example images

Database Rights

The VOC2007 data includes some images provided by “flickr”. Use of these images must respect the corresponding terms of use:

For the purposes of the challenge, the identity of the images in the database, e.g. source and name of owner, has been obscured. Details of the contributor of each image can be found in the annotation to be included in the final release of the data, after completion of the challenge. Any queries about the use or ownership of the data should be addressed to the organizers.

Development Kit

The development kit provided for the VOC challenge 2007 is available. You can:

- Download the training/validation data (450MB tar file)

- Download the development kit code and documentation (250KB tar file)

- Download the PDF documentation (120KB PDF)

- Browse the HTML documentation

- View the guidelines used for annotating the database

The updated development kit made available 11-Jun-2007 contains two changes:

- Bug fix: There was an error in the VOCevaldet function affecting the accuracy of precision/recall curve calculation. Please download the update to correct.

- Changes to the location of results files to support running on both VOC2007 and VOC2006 test sets, see below.

It should be possible to untar the updated development kit over the previous version with no adverse effects.

Test Data

The annotated test data for the VOC challenge 2007 is now available:

- Download the annotated test data (430MB tar file)

- Download the annotation only (12MB tar file, no images)

This is a direct replacement for that provided for the challenge but additionally includes full annotation of each test image, and segmentation ground truth for the segmentation taster images. The annotated test data additionally contains information about the owner of each image as provided by flickr. An updated version of the training/validation data also containing ownership information is available in the development kit.

Results

Detailed results of all submitted methods are now online. For summarized results and information about some of the best-performing methods, please see the workshop presentations:

Citation

If you make use of the VOC2007 data, please cite the following reference in any publications:

@misc{pascal-voc-2007,

author = "Everingham, M. and Van~Gool, L. and Williams, C. K. I. and Winn, J. and Zisserman, A.",

title = "The {PASCAL} {V}isual {O}bject {C}lasses {C}hallenge 2007 {(VOC2007)} {R}esults",

howpublished = "http://www.pascal-network.org/challenges/VOC/voc2007/workshop/index.html"}

Timetable

- 7 April 2007 : Development kit (training and validation data plus evaluation software) made available.

- 11 June 2007: Test set made available.

- 24 September 2007, 11pm GMT: DEADLINE (extended) for submission of results.

- 15 October 2007: Visual Recognition Challenge workshop (Caltech 256 and PASCAL VOC2007) to be held as part of ICCV 2007 in Rio de Janeiro, Brazil.

Submission of Results

Participants are expected to submit a single set of results per method employed. Participants who have investigated several algorithms may submit one result per method. Changes in algorithm parameters do not constitute a different method – all parameter tuning must be conducted using the training and validation data alone.

Details of the required file formats for submitted results can be found in the development kit documentation.

The results files should be collected in a single archive file (tar/zip) and placed on an FTP/HTTP server accessible from outside your institution. Email the URL and any details needed to access the file to Mark Everingham, me@comp.leeds.ac.uk. Please do not send large files (>1MB) directly by email.

Participants submitting results for several different methods (noting the definition of different methods above) may either collect results for each method into a single archive, providing separate directories for each method and an appropriate key to the results, or may submit several archive files.

In addition to the results files, participants should provide contact details, a list of contributors and a brief description of the method used, see below. This information may be sent by email or included in the results archive file.

- URL of results archive file and instructions on how to access.

- Contact details: full name, affiliation and email.

- Details of any contributors to the submission.

- A brief description of the method. LaTeX+BibTeX is preferred. Participants should provide a short self-contained description of the approach used, and may provide references to published work on the method, where available.

For participants using the provided development kit, all results are stored in the results/ directory. An archive suitable for submission can be generated using e.g.:

tar cvf markeveringham_results.tar results/

Participants not making use of the development kit must follow the specification for contents and naming of results files given in the development kit. Example files in the correct format may be generated by running the example implementations in the development kit.

Running on VOC2006 test data

If at all possible, participants are requested to submit results for both the VOC2007 and VOC2006 test sets provided in the test data, to allow comparison of results across the years.

The updated development kit provides a switch to select between test sets. Results are placed in two directories, results/VOC2006/ or results/VOC2007/ according to the test set.

Publication Policy

The main mechanism for dissemination of the results will be the challenge webpage. Further details will be made available at a later date.

Organizers

- Mark Everingham (Leeds), me@comp.leeds.ac.uk

- Luc van Gool (Zurich)

- Chris Williams (Edinburgh)

- John Winn (MSR Cambridge)

- Andrew Zisserman (Oxford)

Acknowledgements

We gratefully acknowledge the following, who spent many long hours providing annotation for the VOC2007 database: Moray Allan, Patrick Buehler, Terry Herbert, Anitha Kannan, Julia Lasserre, Alain Lehmann, Mukta Prasad, Till Quack, John Quinn, Florian Schroff. We are also grateful to James Philbin, Ondra Chum, and Felix Agakov for additional assistance.